Modern software teams increasingly rely on general-purpose AI models that can handle a wide range of tasks without constant switching or complex orchestration. Claude sonnet 5 has emerged as one of the models developers reach for when they need reliable reasoning, strong language understanding, and predictable behavior across different workloads. It is not designed to dominate a single niche but to remain useful across product features, internal tooling, and customer-facing applications. That balance has made it attractive to teams who value consistency as much as raw capability. Understanding how Sonnet works in real environments helps clarify why it is often chosen as a default model rather than a specialist option.

What Claude Sonnet 5 Is Designed For

Claude Sonnet 5 is built as a general-purpose AI model that performs well across a broad spectrum of tasks without heavy tuning. In practice, this means it can handle natural language understanding, content generation, summarisation, classification, and reasoning tasks within the same application. Many development teams adopt Sonnet when they want one model to support multiple features instead of maintaining a fragmented model stack.

From a design perspective, Sonnet emphasizes balanced reasoning rather than extreme optimization for speed or depth alone. It can interpret long user prompts, follow structured instructions, and maintain coherence over extended responses. This makes it suitable for applications where context matters, such as document analysis tools, internal knowledge assistants, and customer support workflows.

In real projects, Sonnet often becomes the model used during early product development. Teams can prototype features quickly without worrying that the model will break when requirements shift. This flexibility reduces engineering overhead and allows product decisions to evolve without repeated model migrations.

Performance Characteristics in Real Workloads

Performance in production environments is rarely about benchmark scores alone. Developers care about response stability, output consistency, and how a model behaves under varied prompt styles. Claude Sonnet 5 tends to perform predictably across these dimensions, which is why it is frequently deployed in user-facing features.

In content-heavy workflows, Sonnet handles tone and structure with a level of reliability that reduces the need for post-processing. Teams building writing assistants or reporting tools often note that the model produces fewer abrupt shifts in style compared to more aggressively optimized models. This leads to outputs that feel cohesive rather than stitched together.

For reasoning-oriented tasks such as analyzing user feedback, interpreting logs, or generating explanations, Sonnet shows steady logical flow. It may not always provide the deepest possible analysis, but it delivers reasoning that is clear and usable without excessive prompt engineering. This makes it practical for applications where clarity matters more than exhaustive depth.

Latency is another factor in real workloads. Sonnet strikes a middle ground where responses are fast enough for interactive use while still maintaining thoughtful output. This balance allows teams to deploy it in chat interfaces, dashboards, and embedded tools without compromising user experience.

Product Features Commonly Built with Sonnet

Because Claude Sonnet 5 is not limited to a narrow domain, it appears across a wide range of product features. One common use case is conversational interfaces where users ask open questions and expect coherent, context-aware responses. Sonnet’s ability to track conversation flow makes it suitable for these scenarios without complex memory management.

Another frequent application is document handling. Teams use Sonnet to summarize long reports, extract key insights, or rewrite content for different audiences. Its handling of structured and unstructured text allows it to move between formats smoothly, which is valuable in enterprise tools and content platforms.

Internal tooling is another area where Sonnet performs well. Developers integrate it into systems that assist with decision support, workflow explanations, or internal documentation. Because the model behaves consistently, it can be trusted to produce outputs that employees rely on without constant validation.

In customer support environments, Sonnet is often used to draft responses, classify tickets, or suggest next actions. Its balanced tone and contextual awareness reduce the risk of responses that feel inappropriate or overly generic, which is critical in customer-facing interactions.

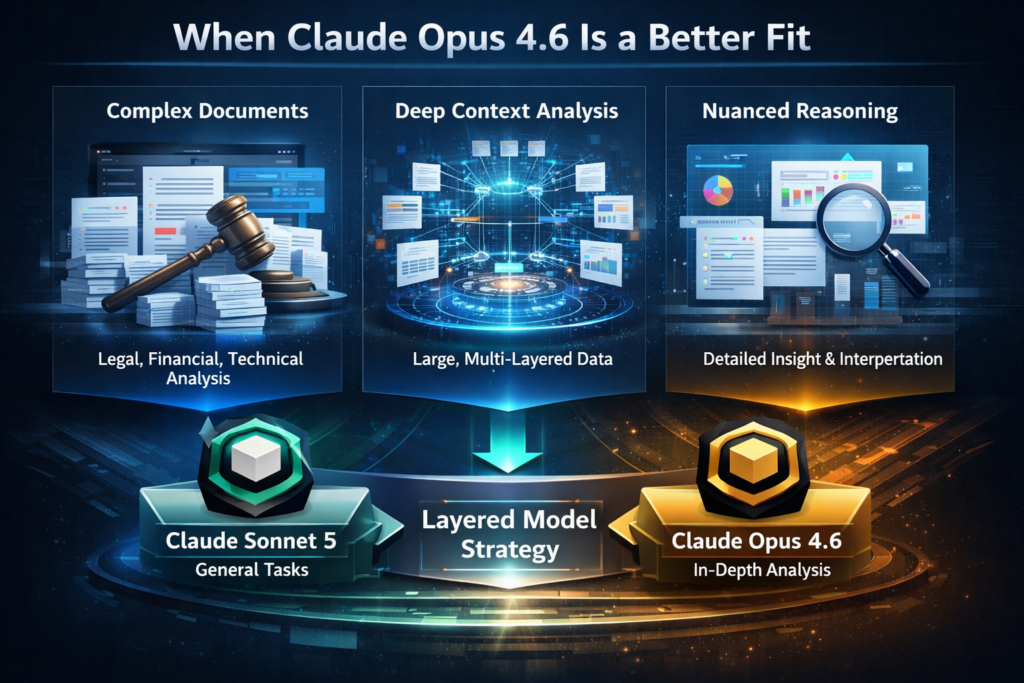

When Claude Opus 4.6 Is a Better Fit

While Sonnet covers many scenarios effectively, there are situations where a more specialized model is the better choice. Claude opus 4.6 is often preferred when tasks require deeper reasoning over very large contexts. In enterprise environments where documents span extensive histories or where nuanced analysis is required, Opus provides stronger capabilities.

Teams working with complex legal, financial, or technical documentation may find that Opus handles layered reasoning more thoroughly. Its ability to maintain coherence across dense inputs makes it suitable for analysis-heavy workflows where missing subtle connections could be costly.

In practice, some organizations deploy both models together. Sonnet handles everyday interactions and general features, while Opus is reserved for tasks that demand deeper analysis. This layered approach allows teams to balance performance, cost, and capability without forcing a single model to do everything.

How GPT 5.3 Codex Complements Sonnet

In development-focused environments, gpt 5.3 codex often complements Claude Sonnet 5 rather than replacing it. Codex is typically chosen for code-centric tasks such as generating functions, reviewing pull requests, or explaining code behavior. Its training emphasis makes it particularly effective in software engineering workflows.

Teams building products with both user-facing language features and technical automation often combine these models. Sonnet handles communication, explanations, and content generation, while Codex supports engineering productivity behind the scenes. This separation allows each model to operate within its strengths.

For example, a SaaS platform might use Sonnet to power user documentation and support chat, while Codex assists developers with internal tooling and code generation. This combination reduces friction across both customer experience and engineering efficiency without forcing a single model to cover incompatible tasks.

Choosing Sonnet as a Default Model

Selecting a default AI model is rarely about finding the most powerful option on paper. It is about choosing a model that behaves well across changing requirements and diverse use cases. Claude Sonnet 5 often earns that role because it delivers consistent results without demanding constant adjustment.

Teams value its predictability during iteration. As products evolve, prompts change and new features emerge. Sonnet’s ability to adapt without drastic performance shifts reduces maintenance effort. This stability supports long-term development rather than short-term experimentation.

From a strategic perspective, Sonnet fits organizations that want a dependable foundation for AI driven features. It does not promise extreme specialization, but it provides a solid baseline that can be extended with more focused models when needed. That approach aligns with how most real products grow, starting broad and becoming more specialized over time.

By understanding how Sonnet performs in practice, where it excels, and how it fits alongside models like Claude Opus 4.6 and GPT 5.3 Codex, teams can make informed decisions about their AI stack. The result is not just better outputs, but systems that are easier to maintain, scale, and trust in real-world conditions.